Working with Powerful Agents

What are powerful agents?

What are agents?

Agency is quite hard to define. The Stanford Encyclopedia of Philosophy has a whole entry on it.

But let’s define it practically and put our thoughts about the elusive nature of agency to rest. Let’s go with the first line of the Stanford Encyclopedia of Philosophy entry on agency:

In very general terms, an agent is a being with the capacity to act, and ‘agency’ denotes the exercise or manifestation of this capacity.

An LLM is not a being but a machine. So let’s assume that agency applies to non-beings as well, otherwise we will get stuck on defining “being”.

So AI agents have agency when we give them a task, and they can keep running as long as they have power.

On the other hand, does this mean earlier machines had agency too? After all, we gave them a task and they did stuff.

Let’s take the example of a security system rigged to sound an alarm when some anomaly is detected.

It has the capacity to act, but it’s reactive. When an event meets a certain condition, it reacts.

It will not go out of its way to find anomalies like a battalion of soldiers in charge of border security would.

AI agents are a bit different. Even without explicit instructions or conditions, they have the capability to act. A security drone with the intelligence of an LLM, patrolling a sensitive place, would not just react but would actively look for security issues.

So does it mean LLMs have agency?

To some extent, yes. But these AI agents have no intentions of their own and no mental state like a person has. They act only to complete the task assigned.

Their work is ultimately guided by what a human commanded them to do. To be active, an AI agent needs to encode some human intention, as represented by its underlying LLM.

So an AI agent that has taken on the intent of a human is an agent with agency. Without this intention, it has no agency.

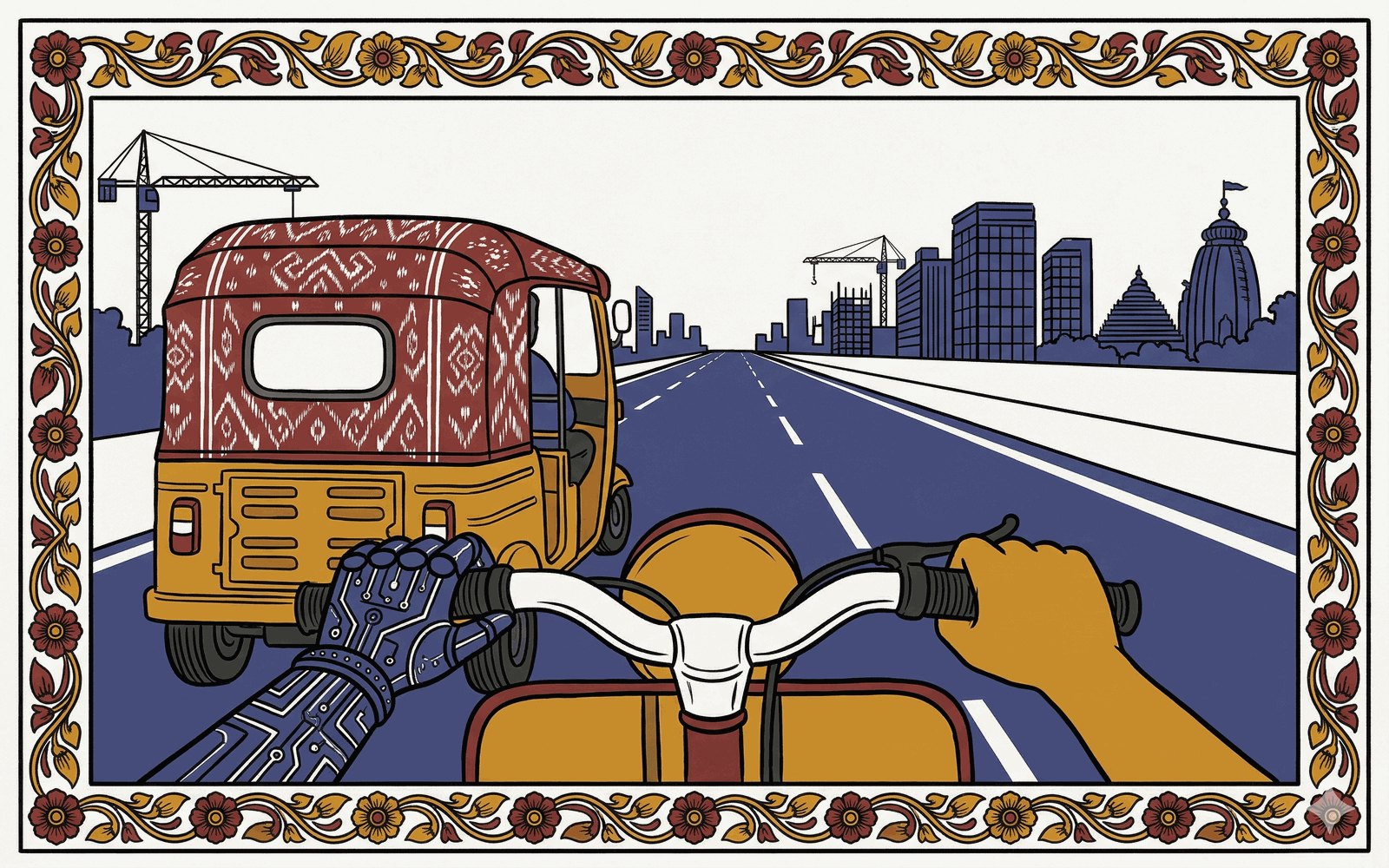

So when you are working with an AI agent that is long-lived, has memory, has access to your computers and their tools, and maybe to your wallet in the near future, remember that it acts on your intentions.

Even if your main LLM spawns 1,000 more agents, they will still carry your intentions. A prompt modifier will only flesh out the specs in the patterns the specific model was trained on.

But the original intention will always remain.
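One way to picture this is a minimal sketch in which every spawned agent holds a reference to the same immutable intent, while only the task detail changes. The names `Intent`, `Agent`, `spawn`, and `all_agents` are hypothetical, invented for this illustration, and do not come from any real agent framework.

```python
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass(frozen=True)
class Intent:
    """The human's original goal. Frozen, so no agent can rewrite it."""
    owner: str
    goal: str


@dataclass
class Agent:
    """Hypothetical agent: shares the root intent, owns only its task detail."""
    name: str
    intent: Intent
    task: str
    children: List["Agent"] = field(default_factory=list)

    def spawn(self, task: str) -> "Agent":
        # The child gets a new task (the "how") but the very same intent object.
        child = Agent(
            name=f"{self.name}.{len(self.children)}",
            intent=self.intent,
            task=task,
        )
        self.children.append(child)
        return child


def all_agents(root: Agent) -> Iterator[Agent]:
    """Walk the whole tree of agents, starting from the root."""
    yield root
    for child in root.children:
        yield from all_agents(child)


# Usage: one human intent, a main agent, and several layers of sub-agents.
intent = Intent(owner="you", goal="launch the product page")
main = Agent(name="main", intent=intent, task="plan the launch")
for detail in ["write copy", "pick images", "check links"]:
    main.spawn(detail)
main.children[0].spawn("draft headline")

carried = all(agent.intent is intent for agent in all_agents(main))
```

Here `carried` is true for the whole tree: the tasks differ at every level, but the intent is the one you wrote down at the top.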

LLMs therefore have no intentions of their own. No will, and no ideas to pursue. The only agency they have is to carry out your idea.

Agents are powerful when they keep the minute “how” decisions for themselves but leave the important, impactful decisions, the few that make all the difference, to you.
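As a rough sketch of that split, the snippet below routes each decision either to the agent or back to the human. Everything in it is an assumption made for illustration: the `Decision` type, the `high_impact` flag, and the `resolve` function are hypothetical, and in practice deciding what counts as high impact is the hard part that no flag solves for you.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Decision:
    description: str
    agent_choice: str   # what the agent would do if left alone
    high_impact: bool   # hypothetical flag: does this define the human's intent?


def resolve(decision: Decision, ask_human: Callable[[str], str]) -> str:
    """Let the agent settle the 'how'; hand intent-defining choices to the human."""
    if decision.high_impact:
        return ask_human(decision.description)
    return decision.agent_choice


# Usage: a stand-in for the human, answering only what is escalated.
escalated = []

def human(question: str) -> str:
    escalated.append(question)
    return "keep the brand voice calm and plain"

decisions = [
    Decision("Which font size for captions?", "14px", high_impact=False),
    Decision("What should the brand voice be?", "loud and playful", high_impact=True),
]
outcomes = [resolve(d, human) for d in decisions]
```

The agent's own answer stands for the caption size, while the brand-voice question reaches the human and the agent's suggestion is treated as advice only.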

Let’s take the example of human agencies like marketing. They act on your behalf to fulfill some task you don’t want to be bothered with.

But they leave the decisions about brand image, important words, overall idea, direction — the stuff that defines your intent — to you.

They do the work of hiring people, writing copy, directing ads, voice, effects, everything. That’s the hard work.

With AI it’s similar. The AI leaves the most fundamental thing, the intent behind the work, to you. Carrying out that intent is the whole purpose of its agency, and thus of its existence.

Good agents empower you instead of weakening you. They do their work as professionals and leave the intent behind that work to their owner.

Good agents will not direct your intent. They assume the role of a minister in the cabinet of a king.

Good agents, like good ministers, will give their professional advice but leave the final intent to the king.

But AI agents have no will, and they certainly are not humans with any understanding of the unconscious.

They won’t pick up on your hesitation or think from your perspective. There can never be face-to-face talks where humans pick up signals unconsciously every second.

So you are the king, but the ministers are the AI.

Those ministers are only as aware as your own knowledge lets them be.

They have no understanding. They have no will. A group of soulless yes-men with a high level of reasoning ability.

No matter how intelligent they are, AI agents treat your words as final. Your intention is final. If you ask an agent questions or tell it about your hesitation, it will certainly address it.

But can you always talk it out or express it in words? Words that carry your intentions to the AI reliably?

Are you aware when you are handing decisions to AI that you should absolutely not delegate?

Agents are powerful only when your expectations and thinking are powerful. When you let agents work with restraint, rather than letting them do all the work.

To work with a powerful agent, you need awareness of your own limitations and strengths.

To work with powerful agents, you need to put your intention into the words that an AI responds to most reliably.
